Yesterday we received an invitation to join the beta of EC2 (Elastic Compute Cloud), Amazon's new service that provides virtual servers integrated with its S3 storage service.

When I first heard about EC2, I immediately thought of those slow, overpopulated servers where users battle over the resources of a few processors and a few gigabytes of memory. Yes, you can get a server for $10 a month, but that is hardly enough for a personal blog. Still, just as I love beta testing online games, I love beta testing Amazon's products: they are always refreshing and ahead of the rest! Needless to say, if Amazon decides to release a service like this, it is because the company is proud of it.

How does it work?

It is basically a repository of file system images (stored in S3) that can be installed and mounted on a virtual server on demand, in less than two minutes. You can upload your own images and turn your web server, your database, or whatever you want into a virtual server. Thanks to a powerful (and secure) set of tools for launching instances of those images, creating clusters has never been this easy! Once an image is running as an instance, you can log in with SSH and configure the firewall to allow traffic on specific ports.

For every hour (or fraction of an hour) that your instance is running, they charge $0.10, plus $0.20 per GB of traffic outside the EC2 network, plus $0.15 per GB-month of storage used in S3. It will not be a $10 virtual server, but it does not carry the costs and risks of a dedicated server either.
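To get a feel for what a month of usage costs at these beta rates, here is a small estimator. It is a sketch that assumes exactly the three charges listed above, with each instance billed per started hour; the function name and parameters are hypothetical, and real bills may include items not mentioned here.

```python
import math

# Beta rates quoted above (USD); assumed to be the only charges.
HOURLY_RATE = 0.10      # per instance-hour; a partial hour is billed as a full one
TRANSFER_RATE = 0.20    # per GB of traffic outside the EC2 network
S3_STORAGE_RATE = 0.15  # per GB-month stored in S3


def monthly_cost(hours_running: float, gb_transferred: float,
                 s3_gb_months: float, instances: int = 1) -> float:
    """Estimate a bill: round hours up per instance, then add traffic and storage."""
    billed_hours = math.ceil(hours_running) * instances
    return (billed_hours * HOURLY_RATE
            + gb_transferred * TRANSFER_RATE
            + s3_gb_months * S3_STORAGE_RATE)


if __name__ == "__main__":
    # One instance running a full 30-day month (720 h), 10 GB out, 5 GB kept in S3.
    print(f"${monthly_cost(720, 10, 5):.2f}")
```

With those example numbers the instance-hours dominate: 720 h × $0.10 = $72, plus $2 of traffic and $0.75 of storage, so an always-on server lands around $75 a month.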

What can we run on it?

They state that you can load any distribution as long as it is compatible with the 2.6 kernel, and they actively support Red Hat Fedora 3 and 4. I tried to build a Debian image with debootstrap, but I could not get it to work. I'm not much of a systems guru, so I probably missed some step that left the image unbootable. The sad thing is that I lost two hours building, compressing and uploading the image for nothing. Following their guidelines, I managed to build a Fedora image that worked nicely.

Is the information we store on that server persistent?

Sadly, the answer is: only while the instance is running. If you want a permanent file system, you have to use S3 or run a distributed file system across other instances. They also state that, although your images are safe in S3, instances can fail, and you would lose all the files stored on that instance (databases, files, configs, logs, …). There is a tool that takes a snapshot of your running system and uploads it to S3 as an image, ready to be reused or to launch a new instance.

How fast is it?

My impression is that it is just a bit slower than a real dedicated server. It feels fast, and it feels uncapped.

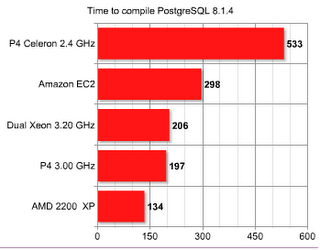

I ran a small benchmark on a few machines I have around the world, measuring how long it takes to compile PostgreSQL 8.1.4.

Here are the results:

Even though it is about a third slower than a dual Intel Xeon or a P4 with an optimized 64-bit kernel, that just means we can forget the word “virtual” when talking about speed. Those are quite real speeds.

Surprisingly, our old AMD running FreeBSD rocks, even though its CPU is clocked at only 1.80 GHz and it is 3-4 years old!
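For anyone who wants to repeat a comparison like this, the measurement boils down to wall-clock timing of the build command on each machine. The helper below is a hypothetical sketch, not the script used for the numbers above; it assumes you have already unpacked and configured the PostgreSQL source tree yourself.

```python
from __future__ import annotations

import subprocess
import time


def time_command(cmd: list[str], cwd: str | None = None) -> float:
    """Run cmd to completion in cwd and return the elapsed wall-clock seconds."""
    start = time.perf_counter()
    # check=True raises CalledProcessError if the build fails, so a broken
    # build is never reported as a (suspiciously fast) timing.
    subprocess.run(cmd, cwd=cwd, check=True)
    return time.perf_counter() - start
```

A usage example, again assuming a configured tree in a directory named `postgresql-8.1.4`: `print(time_command(["make"], cwd="postgresql-8.1.4"))`. Running `make clean` between repetitions keeps later runs from being no-ops.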

What do I get?

Basically, when your budget can't pay for reliability, EC2 is the answer! Never mind that storage is volatile; that can easily be solved with a shared, distributed file system. Once a system works, you make an image of it and it will always work, and you can clone it as many times as you want. When you launch an image, there is even a parameter for the number of instances you want! “Today I'm going to eat 8 web servers, 4 databases and 2 application servers.” Nobody can beat that efficiency: less than 2 minutes, on demand.

Some curiosities…

Not surprisingly, the CPUs are AMD Opteron 250s (or at least that is what /proc/cpuinfo reports). What I still don't know is how it works internally. Is a whole CPU assigned to my instance, or are they really virtualizing a bigger cluster?
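Checking what the guest kernel reports is easy to script. The sketch below counts the `model name` entries in /proc/cpuinfo. Keep in mind that it only shows how many logical CPUs the virtual machine sees and what model they claim to be; it cannot tell whether a physical core is dedicated to the instance. The function name is hypothetical.

```python
from collections import Counter


def cpu_models(cpuinfo_text: str) -> Counter:
    """Count the 'model name' entries in text formatted like /proc/cpuinfo."""
    models = Counter()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "model name":
            models[value.strip()] += 1
    return models


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            print(cpu_models(f.read()))
    except FileNotFoundError:
        print("/proc/cpuinfo is not available on this system")
```

Each logical CPU gets its own `processor` block in /proc/cpuinfo, so the count per model equals the number of CPUs the instance can see.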

The servers come with a generous amount of memory, 1.75 GB, and plenty of “temporary” disk space: 160 GB.

All the tools for building file system images, signing them and publishing them to S3 are written in Ruby.

I'm not really sure, but it looks like you can't assign a static IP address to a server, nor is it guaranteed that an image will always receive the same IP address.

The servers' connectivity is extremely good: a ping to google.com takes 2 ms!

The test

To see how it performs with real workloads, I made an exact copy of our Pricenoia server on an EC2 virtual server:

http://domU-12-31-33-00-02-6A.usma1.compute.amazonaws.com/

Even though it is clearly a lot faster than our dedicated server, it sometimes hangs for a second or two. I haven't had a chance to track down the problem yet, but it looks a bit odd. Actually, that is the only thing that worries me about EC2.
